Large-scale Data Collection, Inference, and Integration for Housing Design Studies

Year 2020-2022

Team Robin Roussel (Lead Researcher), Malgorzata Starzynska (Researcher), Sam Jacoby (Principal Research Supervisor), Ali Asadipour (Co-Research Supervisor)

Funder Prosit Philosophiae Foundation; Research Investment Fund, RCA

This project is a collaboration between the Laboratory for Design and Machine Learning and the RCA’s Computer Science Research Centre. It focuses on the large-scale collection, processing, and analysis of data relevant to housing design and planning in London.

There is currently no architectural database integrating visual data and quantitative metrics. While some of this information is already publicly available, it is scattered across different data sources. Such a database will be valuable for evaluating quantitative housing design criteria and outcomes. It can also provide important insights into definitions of housing quality, as these tend to be assessed through quantifiable parameters (minimum floor area, dimensions, thermal performance, etc.).

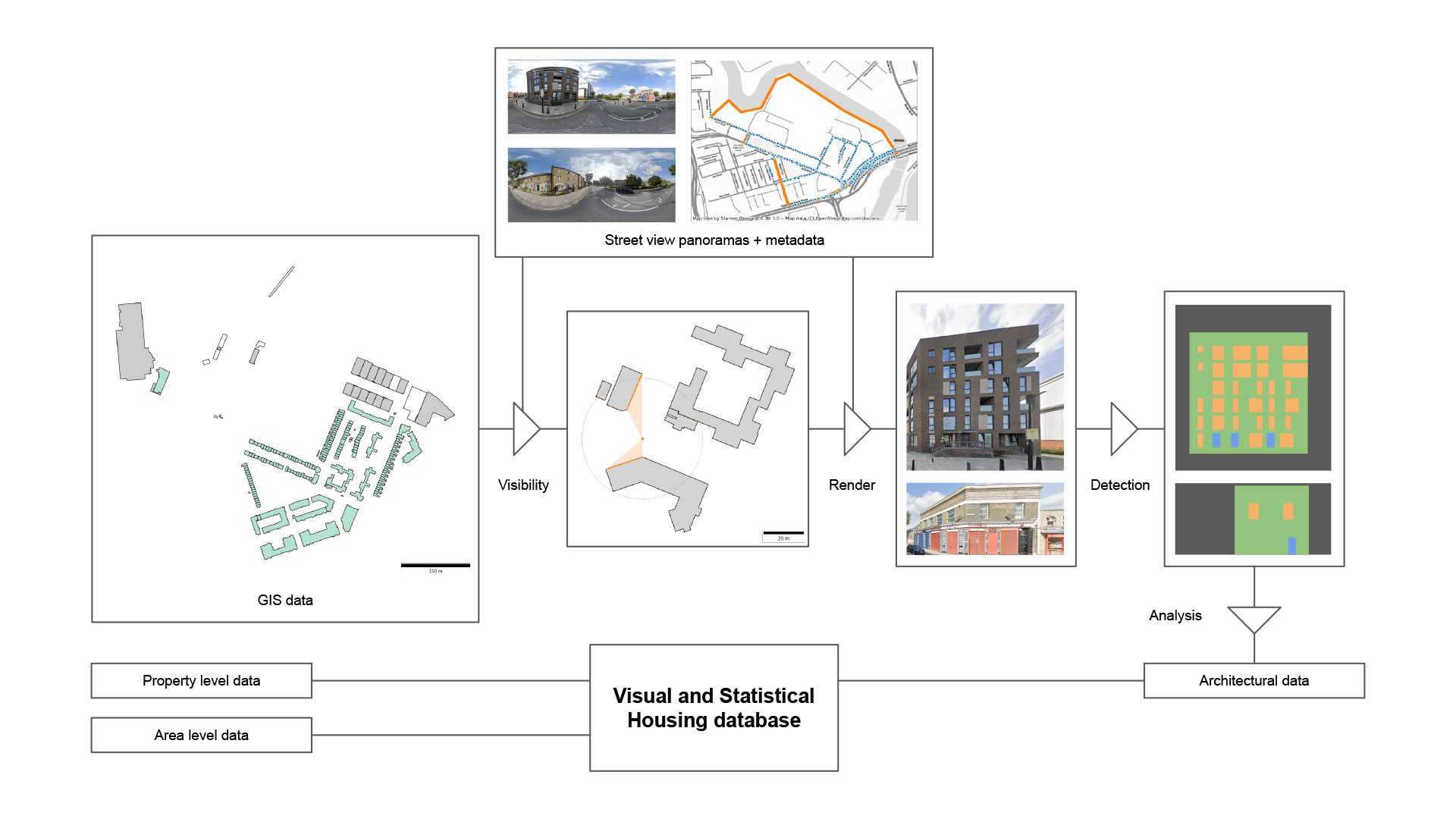

The project develops a novel methodology to automate the large-scale collection and inference of data combining visual and statistical sources on housing in London. The main expected outcome is a database that can inform housing design and development strategy decisions. To demonstrate the use of this database, the project proposes a comparative analysis of the relationships between development control, housing morphologies and urban form, and their evolution over time.

This project involves several challenges. First, image data suffers from perspective distortions and other artefacts (blur, misaligned stitches, etc.) that can only be corrected approximately. Second, buildings are often partly occluded (e.g., in street-level imagery, by trees and cars at the bottom of buildings and by overhanging elements at the top). Lastly, visual and statistical data are produced at different spatial and temporal resolutions. While visual information can be collected about individual buildings, statistical data may be no finer than groups of buildings. Additionally, visual and statistical data are not acquired simultaneously, and the frequency of data collection differs between sources and is not uniform across London.
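One common way to reconcile the two spatial resolutions is to aggregate building-level observations up to the coarser statistical unit before joining them. The sketch below illustrates that idea only; it is not the project's actual integration step. The field names (`area_id`, `window_count`, `median_floor_area_m2`) and the helper `aggregate_to_areas` are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def aggregate_to_areas(buildings, area_stats):
    """Average a per-building attribute within each statistical area,
    then attach the area-level statistics to the result.

    buildings  : list of dicts with hypothetical keys 'area_id', 'window_count'
    area_stats : dict mapping area_id -> dict of area-level statistics
    """
    # Group the building-level values by the area they fall in.
    grouped = defaultdict(list)
    for building in buildings:
        grouped[building["area_id"]].append(building["window_count"])

    rows = []
    for area_id, stats in area_stats.items():
        counts = grouped.get(area_id, [])
        rows.append({
            "area_id": area_id,
            # Keep the sample size so sparse areas can be flagged later.
            "n_buildings_observed": len(counts),
            "mean_window_count": mean(counts) if counts else None,
            **stats,
        })
    return rows


# Illustrative values only, not project data.
buildings = [
    {"area_id": "A", "window_count": 4},
    {"area_id": "A", "window_count": 6},
]
area_stats = {
    "A": {"median_floor_area_m2": 70.0},
    "B": {"median_floor_area_m2": 55.0},
}
rows = aggregate_to_areas(buildings, area_stats)
```

Recording the number of observed buildings alongside each average makes it visible when an area has few or no visual observations, which matters given that image coverage is not uniform.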

The first part of our methodology consists of collecting images from Google Street View, from which we extract facades using a deep convolutional neural network (CNN). These facades are then geo-localised using the view information. A second CNN extracts feature elements from the facades and their environment: windows, doors, bins, urban furniture, etc. Next, these elements are used to infer qualitative and quantitative attributes relating to architectural and urban design (morphology, presence/absence of elements, extensions, accessibility, energy, relation to surrounding buildings, etc.). These attributes are integrated with statistical and planning data to form the final database.
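The geo-localisation step can be illustrated with a minimal sketch. It assumes that the view information includes the camera's latitude and longitude and the compass heading towards the facade, and that a camera-to-facade distance estimate is available from some other source; the text does not specify these details, so this is not the project's actual method. The function `project_facade_position` is hypothetical, and the calculation uses the standard destination-point formula on a spherical Earth.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; spherical approximation


def project_facade_position(cam_lat, cam_lon, heading_deg, distance_m):
    """Estimate a facade's coordinates from a camera position.

    cam_lat, cam_lon : camera position in decimal degrees
    heading_deg      : compass bearing from camera to facade (0 = north, 90 = east)
    distance_m       : assumed camera-to-facade distance in metres
    """
    lat1 = math.radians(cam_lat)
    lon1 = math.radians(cam_lon)
    bearing = math.radians(heading_deg)
    angular_dist = distance_m / EARTH_RADIUS_M

    # Destination point given a start point, bearing, and distance.
    lat2 = math.asin(
        math.sin(lat1) * math.cos(angular_dist)
        + math.cos(lat1) * math.sin(angular_dist) * math.cos(bearing)
    )
    lon2 = lon1 + math.atan2(
        math.sin(bearing) * math.sin(angular_dist) * math.cos(lat1),
        math.cos(angular_dist) - math.sin(lat1) * math.sin(lat2),
    )
    return math.degrees(lat2), math.degrees(lon2)


# A facade assumed to be 12 m due east of a camera in central London.
facade_lat, facade_lon = project_facade_position(51.5, -0.12, 90.0, 12.0)
```

At street scale the spherical approximation introduces negligible error compared with the uncertainty in the heading and distance estimates, so a projected point like this would typically be matched to the nearest building footprint rather than used as an exact location.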
